Accurately count LLM tokens for GPT and Claude models. Analyze text length, visualize token distribution with color coding, and optimize your API prompts.

AI Generation Prompt

AI LLM Token Counter and Text Analyzer

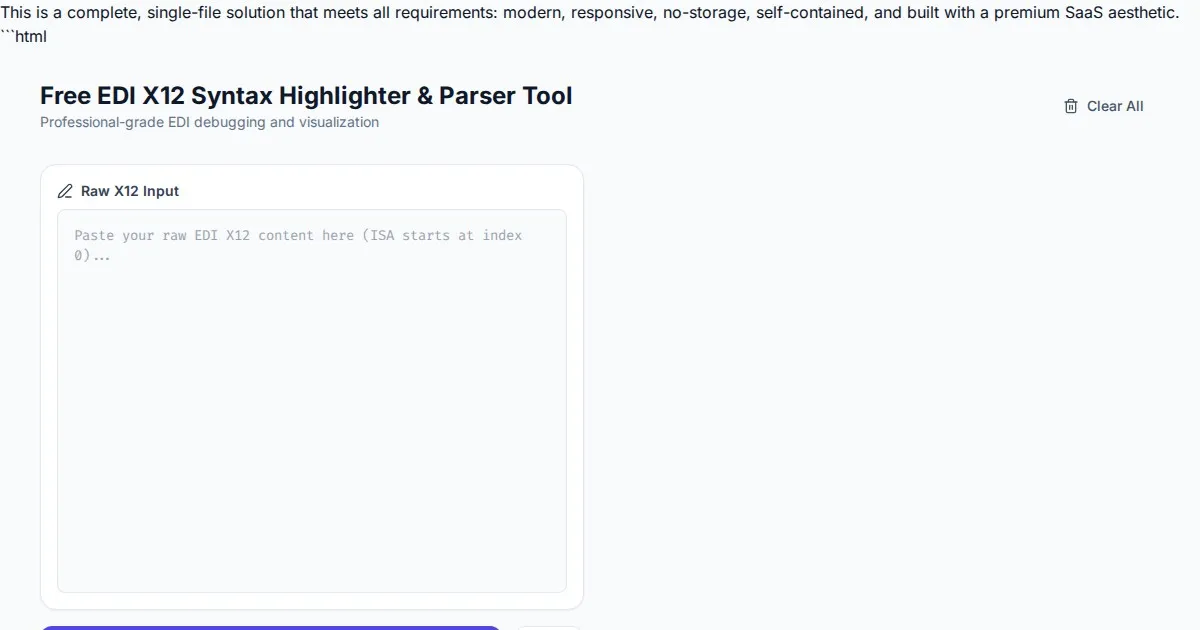

This application is a specialized client-side utility designed for developers and prompt engineers to analyze text input for Large Language Models (LLMs).

Visual Design and Layout

- Color Palette: A professional, high-contrast palette featuring a slate-grey sidebar, crisp white workspace, and a spectrum of soft pastels (mint, lavender, peach) for highlighting tokens.

- Layout Structure:

  - Header: Contains the app title, a model selection dropdown (e.g., GPT-4o, Claude 3.5), and a reset button.

  - Main Workspace: A split-screen layout with an 'Input Editor' on the left and a 'Visual Tokenization' preview on the right.

  - Footer: A live statistics bar showing character count, word count, and current token count.

Animations

- Token Rendering: As the user types, tokens are rendered into the preview pane with a subtle 'fade-in' animation.

- Dynamic Updates: Token counts animate from the old value to the new value using a count-up transition effect.
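The count-up transition can be sketched as a pure interpolation function. This is a minimal sketch, not the app's actual code: the names `easeOutCubic` and `countUpValue`, the easing curve, and the duration are all assumptions chosen for illustration.

```typescript
// Ease-out cubic: moves quickly at first, then slows as it nears the target.
function easeOutCubic(t: number): number {
  return 1 - Math.pow(1 - t, 3);
}

// Value to display `elapsedMs` into a transition from `from` to `to`.
// Pure function: the caller supplies the clock, which keeps it easy to test.
function countUpValue(
  from: number,
  to: number,
  elapsedMs: number,
  durationMs: number
): number {
  if (durationMs <= 0 || elapsedMs >= durationMs) return to;
  const t = Math.max(0, elapsedMs) / durationMs; // progress in [0, 1)
  return Math.round(from + (to - from) * easeOutCubic(t));
}
```

In a browser, this would typically be called once per frame from a `requestAnimationFrame` callback, passing the time elapsed since the token count changed, until it returns the target value.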

Interactive Features

- Live Input Processing: The application uses debounced event listeners to re-tokenize the input text in real time, without refreshing the page.

- Color-Coded Token Highlighting: Each token is wrapped in a span whose background color cycles through the pastel palette, so adjacent tokens never share a color and it is clear where one token ends and the next begins.

- Model Selection Engine: Users can switch between tokenizer encodings (e.g., o200k_base for GPT-4o, cl100k_base for GPT-4 and GPT-3.5 Turbo, or p50k_base for older completion models) so the count matches the selected model family.

- Copy-to-Clipboard: A single-click feature to copy the tokenized array or the original text for prompt optimization.

- Dark/Light Mode: A toggle to switch between a high-visibility light mode and a low-light dark mode for late-night coding sessions.
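As an illustration of the color-coded highlighting described above, the following sketch turns a list of token strings into HTML spans with a cycling background color. The function names, the `token` class name, and the hex values are hypothetical; the real app may build DOM nodes directly instead of an HTML string.

```typescript
// Assumed pastel palette (mint, lavender, peach); the hex values are illustrative.
const TOKEN_COLORS: readonly string[] = ["#d5f5e3", "#e8daef", "#fdebd0"];

// Escape characters that are special in HTML so token text is shown literally.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Wrap each token in a <span> whose background cycles through the palette.
// With two or more colors, adjacent tokens never share a background.
function renderTokens(
  tokens: readonly string[],
  colors: readonly string[] = TOKEN_COLORS
): string {
  return tokens
    .map((token, i) => {
      const color = colors[i % colors.length];
      return `<span class="token" style="background:${color}">${escapeHtml(token)}</span>`;
    })
    .join("");
}
```

Escaping matters here because prompts often contain markup or code; without it, pasted HTML would be interpreted by the preview pane rather than displayed.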

Technical Implementation

- Performance: The app runs entirely client-side and uses Web Workers to tokenize off the main thread, so the UI stays responsive even with large blocks of text.

- State Management: Lightweight local state stores the user's input and current model settings.
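One way to structure the worker hand-off is a small request/response protocol in which each request carries an id, so replies that arrive after the user has typed again can be discarded. The sketch below is an assumption about how this could look, not the app's actual design: the message shapes, `handleRequest`, and `LatestOnly` are hypothetical names, and `placeholderTokenize` is a whitespace splitter standing in for a real BPE tokenizer.

```typescript
// Message shapes exchanged with the worker (hypothetical names).
interface TokenizeRequest {
  id: number;
  text: string;
  encoding: string;
}

interface TokenizeResponse {
  id: number;
  tokens: string[];
}

// Stand-in tokenizer: alternating runs of whitespace and non-whitespace.
// This is NOT real BPE; a real worker would load the requested encoding.
function placeholderTokenize(text: string): string[] {
  return text.match(/\s+|\S+/g) ?? [];
}

// Worker side: a pure handler, so it can be tested without a Worker.
// The placeholder ignores `req.encoding`.
function handleRequest(req: TokenizeRequest): TokenizeResponse {
  return { id: req.id, tokens: placeholderTokenize(req.text) };
}

// Main-thread side: tags requests with an increasing id and
// reports whether a reply belongs to the most recent request.
class LatestOnly {
  private latestId = 0;

  next(text: string, encoding: string): TokenizeRequest {
    this.latestId += 1;
    return { id: this.latestId, text, encoding };
  }

  isCurrent(res: TokenizeResponse): boolean {
    return res.id === this.latestId;
  }
}
```

In the browser, the worker script would pass incoming message data to `handleRequest` and post the result back, while the main thread would post `tracker.next(text, encoding)` from the debounced input handler and render only replies for which `isCurrent` returns true.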

Frequently Asked Questions

Everything you need to know about using this application.

How does the token counter determine the count?

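The tool applies byte-pair encoding (BPE), the tokenization scheme used by major language models, with the encoding selected in the model dropdown. The count matches what a model sees only when that encoding is the one the model actually uses.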
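To make the BPE idea concrete, here is a toy encoder. It is a simplified sketch under stated assumptions: the merge table `TOY_MERGES` is invented for illustration, and the encoder starts from characters, whereas production tokenizers start from UTF-8 bytes and use merge tables with tens of thousands of entries.

```typescript
// Toy merge table, in priority order: earlier entries are merged first.
// These merges are hypothetical, not taken from any real encoding.
const TOY_MERGES: ReadonlyArray<readonly [string, string]> = [
  ["l", "o"],
  ["lo", "w"],
  ["e", "r"],
];

// Join a pair with a separator that cannot appear in ordinary text.
const pairKey = (a: string, b: string): string => `${a}\u0000${b}`;

// Map each mergeable pair to its rank (its index in the merge table).
function buildRanks(
  merges: ReadonlyArray<readonly [string, string]>
): Map<string, number> {
  const ranks = new Map<string, number>();
  merges.forEach(([a, b], rank) => ranks.set(pairKey(a, b), rank));
  return ranks;
}

// Repeatedly merge the adjacent pair with the lowest rank until none remain.
function bpeEncode(word: string, ranks: Map<string, number>): string[] {
  let parts = Array.from(word); // characters here; real tokenizers use bytes
  while (parts.length > 1) {
    let bestIndex = -1;
    let bestRank = Infinity;
    for (let i = 0; i < parts.length - 1; i++) {
      const rank = ranks.get(pairKey(parts[i], parts[i + 1]));
      if (rank !== undefined && rank < bestRank) {
        bestRank = rank;
        bestIndex = i;
      }
    }
    if (bestIndex === -1) break; // no mergeable pair left
    parts = [
      ...parts.slice(0, bestIndex),
      parts[bestIndex] + parts[bestIndex + 1],
      ...parts.slice(bestIndex + 2),
    ];
  }
  return parts;
}

// bpeEncode("lower", buildRanks(TOY_MERGES)) returns ["low", "er"]
```

With this table, "lower" goes from five characters to two tokens, while a string with no matching pairs, such as "xyz", stays as three single-character tokens. This is why common words cost fewer tokens than rare ones.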

Why is the text colored during analysis?

Color coding highlights individual token boundaries, letting you see how complex words and punctuation marks are segmented by the model.

Can I use this for API cost estimation?

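Yes. The tool gives you the token count for your prompt, which you can multiply by a provider's current rate (typically quoted per 1,000 or per 1,000,000 tokens) to estimate cost. Because output tokens are usually billed separately, this covers the input side only.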
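The arithmetic is a single proportion, sketched below. The function name `estimateCost` and its parameters are hypothetical, and the rates in the usage note are placeholders, not real prices; check each provider's pricing page for current figures.

```typescript
// Estimated cost of `tokenCount` tokens at `rate` currency units
// per `perTokens` tokens (1,000 by default).
function estimateCost(
  tokenCount: number,
  rate: number,
  perTokens: number = 1000
): number {
  if (tokenCount < 0 || rate < 0 || perTokens <= 0) {
    throw new RangeError(
      "tokenCount and rate must be non-negative; perTokens must be positive"
    );
  }
  return (tokenCount / perTokens) * rate;
}
```

For example, at a placeholder rate of 0.01 per 1,000 tokens, a 2,500-token prompt comes to roughly 0.025. For a provider that quotes per million tokens, pass `1000000` as the third argument.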